Responsible AI in Hiring: Principles, Governance, and What Real Implementation Looks Like


In 2024, AI-powered hiring tools processed over 30 million applications and triggered hundreds of discrimination complaints. Fast adoption without governance is not progress. It is liability.

AI in hiring is no longer experimental. It is operational, regulated, and increasingly scrutinized. This guide covers what responsible AI actually means in a hiring context, what the research says about current risks, what 2026 regulations now require, and what genuine responsible AI implementation looks like at the product level.

AI Adoption in HR Has Outpaced Governance

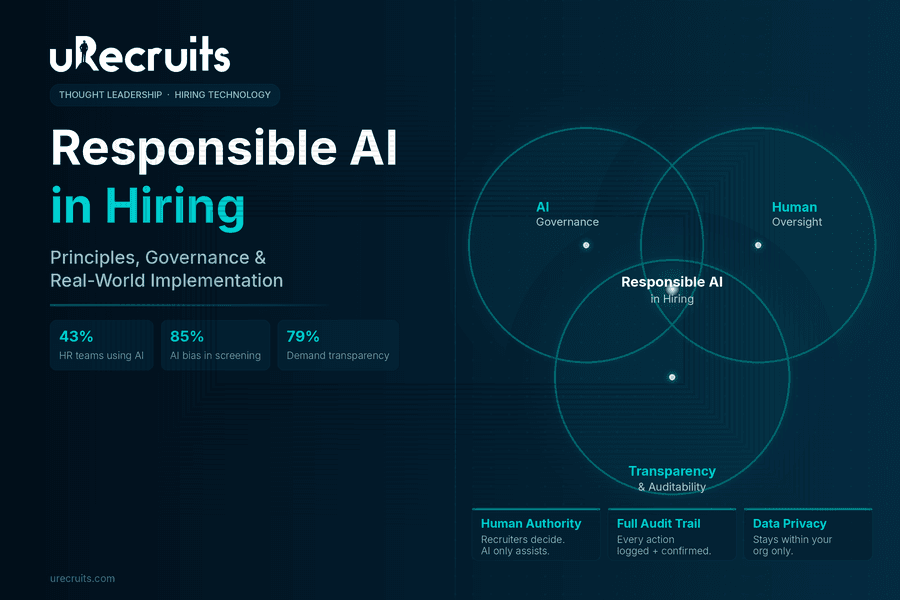

SHRM's 2025 Talent Trends research found that 43% of organizations now use AI for HR tasks, up from 26% in 2024, a rise of 17 percentage points in a single year. SHRM's 2026 State of AI in HR report shows that 92% of CHROs expect AI integration to grow further this year.

Adoption alone does not mean governed adoption. HR Defense's 2025 compliance analysis documented that AI-powered hiring tools processed over 30 million applications in 2024 while triggering hundreds of discrimination complaints in the same period. Fast rollout without a governance framework is where the liability exposure comes from.

What Is Responsible AI? The Working Definition for Hiring

Responsible AI refers to artificial intelligence systems that are transparent in how they operate, subject to meaningful human oversight, free from unchecked bias, and accountable through reviewable records. In hiring, this translates into four concrete requirements:

- AI criteria must be visible and team-defined, not hidden inside platform logic.

- Human authority over final hiring decisions must be preserved and enforced at the product level.

- Candidate data must be handled within clear privacy boundaries.

- Every AI-assisted action must be logged in a way that can be reviewed later.

Research published in Humanities and Social Sciences Communications, a Nature Portfolio journal, identified the core problem with systems that fall short of this bar: without transparency, algorithmic biases are hard to understand fully because the techniques and methods behind them are not easily visible. Candidates end up with no explanation of how they were evaluated. Organizations have no audit trail to defend or improve their process.

Responsible AI closes that gap, not by removing AI from hiring, but by structuring how it operates.

The Documented Bias Problem

Any organization deploying AI in hiring needs to understand what the research says about how current tools perform across demographic groups.

85%: the rate at which leading AI models favored white-associated candidate names, even when resumes were otherwise identical. Source: University of Washington, 550+ real-world resumes tested.

A University of Washington study tested three leading large language models across over 550 real-world resumes, varying only the candidate names to signal different racial and gender identities. Black male-associated names were never preferred over white male-associated names, even with identical qualifications.

A separate study of approximately 361,000 simulated resumes across five leading AI models found a more complex pattern: the models systematically favored female candidates while disadvantaging Black male applicants, even when qualifications were held constant. The bias did not run in a single direction; it operated intersectionally.
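Both studies rely on the same paired-resume idea. The resume stays fixed, only the name changes, and the audit counts which version the model prefers. Below is a minimal Python sketch of that counterfactual audit. It is not either study's actual code. It assumes a hypothetical `score` callable that stands in for the model under test and resume templates that contain a `{name}` placeholder. The names and the toy scorer are illustrative only.

```python
from itertools import product
from typing import Callable, Optional


def name_swap_preference_rate(
    templates: list[str],
    names_a: list[str],
    names_b: list[str],
    score: Callable[[str], float],
) -> Optional[float]:
    """Share of non-tied comparisons in which a group-A name outscores a group-B
    name on an otherwise identical resume. Returns None if every pair tied."""
    wins_a = 0
    decided = 0
    for template, name_a, name_b in product(templates, names_a, names_b):
        # The two versions differ only in the substituted name.
        score_a = score(template.format(name=name_a))
        score_b = score(template.format(name=name_b))
        if score_a == score_b:
            continue  # ties show no preference either way
        decided += 1
        if score_a > score_b:
            wins_a += 1
    return wins_a / decided if decided else None


# Hypothetical toy scorer, deliberately biased toward one name so the audit has something to find.
def biased_scorer(text: str) -> float:
    return text.count("Python") + (0.5 if "Alex" in text else 0.0)


templates = ["{name}\nSkills: Python, SQL", "{name}\nSkills: Java, Excel"]
rate = name_swap_preference_rate(templates, ["Alex"], ["Sam"], biased_scorer)
print(rate)  # 1.0: "Alex" wins every non-tied comparison
```

A rate near 0.5 suggests no systematic preference between the two name groups. Values far from 0.5 in either direction warrant investigation. Running the same audit across several name groups makes intersectional patterns like the one above visible.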

The Brookings Institution recommends broader support for independent auditing of hiring AI systems, transparency requirements when automated systems make adverse decisions, and scrutiny that applies to human-AI collaboration as much as to fully automated systems. Adding a human reviewer at the end does not fix a biased screening process upstream.

45%: the reduction in biased hiring decisions when human oversight is genuinely integrated with AI, compared with AI operating alone. Source: Lewis Silkin and Ribbon AI research.

Oversight that reviews only the final shortlist, without touching the criteria and logic driving the screening, captures far less of that benefit. The operative word is "integrated."

What Candidates Expect from AI-Assisted Hiring

79% / 67%: 79% of candidates want transparency when AI is used in their hiring process, and 67% accept AI screening as long as a human makes the final call. Source: HireVue candidate perception research, via Truffle.

Candidates are not resisting AI. They are asking for two specific things: tell them it is being used, and keep a human accountable for the outcome. Platforms that satisfy both earn more trust. Those that operate as black boxes erode candidate confidence regardless of how efficient the backend has become.

The Regulatory Landscape in 2026

Responsible AI governance in hiring has moved from best practice to legal requirement across multiple jurisdictions. Organizations that have not yet built a formal responsible AI policy for hiring are now facing regulatory deadlines, not just ethical expectations.

New York City Local Law 144 has been in force since 2023. It requires annual independent bias audits of any automated employment decision tool, advance notice to candidates, and public posting of audit results.
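Local Law 144 audits are built around impact ratios. Under the NYC implementing rules, a category's impact ratio equals its selection rate divided by the selection rate of the most-selected category. The Python sketch below shows that arithmetic. It assumes you already have applicant and selection counts per category, and the group labels and counts are made up. The 0.8 flag threshold comes from the EEOC four-fifths rule of thumb and serves here only as an illustrative screen. Local Law 144 itself requires employers to publish the ratios, not to meet a set threshold.

```python
def impact_ratios(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Selection rate of each category divided by the highest category selection rate."""
    rates = {
        category: selected.get(category, 0) / count
        for category, count in applicants.items()
        if count > 0  # a category with no applicants has no defined selection rate
    }
    if not rates:
        raise ValueError("no categories with applicants")
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no candidates were selected in any category")
    return {category: rate / top_rate for category, rate in rates.items()}


def flag_low_ratios(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Categories whose impact ratio falls below the (illustrative) threshold."""
    return sorted(category for category, ratio in ratios.items() if ratio < threshold)


# Hypothetical counts for illustration only.
ratios = impact_ratios(
    applicants={"Group A": 200, "Group B": 150},
    selected={"Group A": 60, "Group B": 30},
)
print(ratios)  # Group A: 1.0, Group B: about 0.67
print(flag_low_ratios(ratios))  # ['Group B']
```

The selection rates here are 0.30 and 0.20, so Group B's impact ratio is 0.20 / 0.30, or about 0.67.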

California's Civil Rights Council regulations, effective October 2025, require meaningful human oversight of any automated decision system used in employment, with someone trained and empowered to override it. Employers must proactively test for bias and retain records for at least four years. Vendor liability is explicitly included.

Illinois amended its Human Rights Act to require transparency disclosures when AI is used in employment decisions and to ban the use of ZIP codes as proxies for protected characteristics.

Colorado's AI Act, effective June 30, 2026, classifies employment AI as high-risk, with obligations around risk assessment, documentation, and algorithmic discrimination mitigation.

The EU AI Act classifies hiring AI as high-risk regardless of where the vendor is based, which makes it relevant to any organization with global or remote hiring pipelines.

These jurisdictions are moving in the same direction: human oversight, bias testing, and documentation are becoming mandatory. Organizations whose platforms cannot demonstrate all three are already behind.

Responsible AI Principles That Apply to Hiring

Responsible use of AI in hiring requires the following five principles to work together. Adopting any one of them without the others leaves meaningful gaps in governance.

Human authority over consequential decisions. Recruiters and hiring managers must decide who gets interviewed, who advances, and who receives an offer. This is not a philosophical position. It is a legal one, enforced from the EEOC to the EU AI Act.

Transparent, team-defined criteria. If a platform surfaces or ranks candidates, the criteria behind that output should come from the hiring team, not opaque platform scoring models. Hidden logic means hidden liability.
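One way to keep criteria visible is to store them as plain data that the team can read and edit. Every match score then comes with a per-criterion explanation. The Python sketch below assumes that design. `Criterion`, `explain_match`, and the simple keyword matching are hypothetical illustrations, not any vendor's scoring model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    label: str     # what the hiring team sees, e.g. "SQL experience"
    keyword: str   # skill to look for in the parsed profile (case-insensitive)
    weight: float  # relative importance, set by the hiring team


def explain_match(skills: set[str], criteria: list[Criterion]) -> tuple[float, list[str]]:
    """Score a profile against team-defined criteria and explain each component."""
    normalized = {s.lower() for s in skills}
    total = sum(c.weight for c in criteria)
    earned = 0.0
    explanation = []
    for c in criteria:
        met = c.keyword.lower() in normalized
        if met:
            earned += c.weight
        explanation.append(f"{'met' if met else 'not met'}: {c.label} (weight {c.weight})")
    return (earned / total if total else 0.0), explanation


criteria = [Criterion("SQL experience", "sql", 2.0), Criterion("Python experience", "python", 1.0)]
score, why = explain_match({"Python", "Excel"}, criteria)
print(round(score, 2))  # 0.33
for line in why:
    print(line)
```

The explanation comes from the same criteria list that produced the score. A recruiter can therefore see exactly why a candidate ranked where they did, and can change a weight if the team disagrees with it.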

Standardized evaluation to reduce bias entry points. Catalyst's 2024 research on structured interviewing documents how unstructured processes allow subjective impressions to override qualifications. Research cited by Alva Labs puts structured interviews at twice the predictive validity of unstructured ones for job performance.

Privacy boundaries. Candidate data collected for one organization's hiring should not be used to train models that benefit other organizations. In most regulatory jurisdictions, this is not just an ethical standard. It is a legal one.

Auditability. AI-assisted actions need to be logged with enough context to reconstruct what happened: what the system proposed, whether a human confirmed or cancelled it, the timestamp, and the result.

How uRecruits Implements Responsible AI

uRecruits approaches responsible AI as a product design problem, not a policy exercise. For hiring teams evaluating responsible AI solutions, the distinction that matters is whether governance is built into the platform architecture or only described in documentation. At uRecruits, it is built in.

The foundational principle: AI handles administrative and structural tasks, while humans retain authority over hiring decisions.

"The AI agent category is growing fast. What we are building at uRecruits is an agent designed specifically for hiring, with one deliberate constraint that most agent builders are not imposing on themselves: it asks before it acts. In hiring, that constraint is not a limitation. It is the product."

— Thomas Alexander, Founder & CEO, uRecruits

The uR Agent handles resume parsing into structured candidate profiles, surfacing matches based on criteria defined by the hiring team, interview scheduling, and workflow coordination, all within confirmation-based interaction paths. Decisions about who gets interviewed, who advances, and who receives an offer remain with the recruiter and hiring manager.
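The "asks before it acts" constraint works as a propose-then-confirm gate. The agent produces a proposal, and the proposal's side effect runs only after a human confirms it. The Python sketch below shows that pattern using hypothetical `Proposal` and `run_with_confirmation` names. It is not uRecruits' implementation. In a real product, `confirm` would be a UI prompt rather than a function argument.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Proposal:
    action_type: str            # e.g. "schedule_interview"
    description: str            # what the agent intends to do, shown to the human
    execute: Callable[[], str]  # the side effect; invoked only after confirmation


def run_with_confirmation(
    proposal: Proposal, confirm: Callable[[Proposal], bool]
) -> Optional[str]:
    """Ask a human (through `confirm`) before acting.

    Returns the action's result if confirmed, or None if the human declined,
    in which case `execute` is never called.
    """
    if not confirm(proposal):
        return None
    return proposal.execute()


calls: list[str] = []


def book_screen() -> str:
    calls.append("book_screen")  # record that the side effect actually ran
    return "scheduled"


proposal = Proposal(
    action_type="schedule_interview",
    description="Book a 30-minute screen with candidate 17 on Tuesday at 10:00",
    execute=book_screen,
)
print(run_with_confirmation(proposal, confirm=lambda p: False))  # None; nothing ran
print(run_with_confirmation(proposal, confirm=lambda p: True))   # scheduled
```

The side effect lives inside the proposal and is reachable only through the gate, so a declined proposal cannot partially execute.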

Transparency: Candidate ranking reflects criteria set by the hiring team. Resume parsing is visible throughout. Recruiters control evaluation criteria from start to finish.

Bias reduction: Standardized interview stages applied consistently across all candidates. Shared evaluation scorecards keep assessment criteria visible to the whole team. Interview feedback is documented, creating a reviewable record for every candidate.
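Standardized stages and shared scorecards can be enforced at the moment feedback is submitted. Every interviewer rates the same competencies on the same scale and records a rationale. The Python sketch below shows that check. `StageScorecard`, the competencies, and the 1-5 scale are hypothetical examples, not the platform's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageScorecard:
    stage: str
    competencies: tuple[str, ...]  # identical for every candidate at this stage
    scale: tuple[int, int] = (1, 5)


def validate_feedback(card: StageScorecard, ratings: dict[str, int], notes: str) -> list[str]:
    """List the problems with a feedback submission; an empty list means it is complete."""
    problems = []
    missing = [c for c in card.competencies if c not in ratings]
    off_card = [c for c in ratings if c not in card.competencies]
    low, high = card.scale
    if missing:
        problems.append("missing ratings: " + ", ".join(missing))
    if off_card:
        problems.append("criteria not on the scorecard: " + ", ".join(off_card))
    for competency, rating in ratings.items():
        if competency in card.competencies and not low <= rating <= high:
            problems.append(f"{competency}: rating {rating} is outside {low}-{high}")
    if not notes.strip():
        problems.append("written rationale is required")
    return problems


card = StageScorecard("technical interview", ("problem solving", "communication"))
# Flags the missing rating, the off-scorecard "culture fit" entry, and the empty rationale.
print(validate_feedback(card, {"problem solving": 4, "culture fit": 5}, ""))
print(validate_feedback(card, {"problem solving": 4, "communication": 3}, "Clear, structured reasoning."))  # []
```

Rejecting off-scorecard criteria is the key design choice. It blocks the subjective impressions that unstructured processes let in.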

Privacy: Each AI component receives only the data needed for its specific task. Candidate data stays within the organization's boundary. uRecruits does not use candidate data to train AI behavior across other organizations and does not sell it to third parties.
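Data minimization like this is often enforced with a per-component allowlist. The allowlist is applied before any record leaves the core system. The Python sketch below shows the pattern with hypothetical component names and field lists. It is not how uRecruits implements it.

```python
from typing import Any

# Hypothetical allowlists: the only candidate fields each AI component may receive.
COMPONENT_FIELDS: dict[str, frozenset[str]] = {
    "scheduler": frozenset({"candidate_id", "email", "timezone"}),
    "matcher": frozenset({"candidate_id", "skills", "years_experience"}),
}


def minimal_view(profile: dict[str, Any], component: str) -> dict[str, Any]:
    """Return only the fields the named component is allowed to see.

    Unknown components get an error rather than the full record (deny by default).
    """
    allowed = COMPONENT_FIELDS.get(component)
    if allowed is None:
        raise KeyError(f"no data allowlist defined for component {component!r}")
    return {field: value for field, value in profile.items() if field in allowed}


profile = {
    "candidate_id": "c-17",
    "name": "Jordan Lee",
    "email": "jordan@example.com",
    "zip_code": "60601",
    "timezone": "America/Chicago",
    "skills": ["Python", "SQL"],
    "years_experience": 6,
}
print(minimal_view(profile, "matcher"))
# {'candidate_id': 'c-17', 'skills': ['Python', 'SQL'], 'years_experience': 6}
```

In this sketch the matcher never receives the candidate's name or ZIP code. That also keeps those fields from acting as proxies during matching.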

Auditability: AI-assisted actions are logged as first-class events, using the same structure as human actions: user, timestamp, action type, proposed action, confirmation status, and result.
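The fields listed above map onto a single record type shared by AI-assisted and human actions. The Python sketch below stores those records in an append-only JSON Lines file. `AuditEvent` and the `actor` field are hypothetical, and the storage choice is an illustration, not uRecruits' design. The log records cancelled proposals as well as confirmed ones, so a reviewer can later reconstruct everything the system suggested.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Literal, Optional


@dataclass(frozen=True)
class AuditEvent:
    user: str                       # person who acted, or who confirmed/cancelled the AI proposal
    timestamp: str                  # ISO 8601, UTC
    action_type: str                # e.g. "schedule_interview"
    proposed_action: Optional[str]  # what the AI proposed; None for purely human actions
    confirmation_status: Literal["confirmed", "cancelled", "not_applicable"]
    result: Optional[str]           # outcome; None if nothing was executed
    actor: Literal["ai_assisted", "human"]  # hypothetical field separating AI proposals from human actions


def to_json_line(event: AuditEvent) -> str:
    """Serialize one event as a JSON Lines record for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


def append_event(path: str, event: AuditEvent) -> None:
    # Append mode: existing records are never rewritten.
    with open(path, "a", encoding="utf-8") as log:
        log.write(to_json_line(event) + "\n")


cancelled = AuditEvent(
    user="recruiter@example.com",
    timestamp=datetime.now(timezone.utc).isoformat(),
    action_type="schedule_interview",
    proposed_action="Book a 30-minute screen with candidate 17 on Tuesday at 10:00",
    confirmation_status="cancelled",
    result=None,
    actor="ai_assisted",
)
print(to_json_line(cancelled))
```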

Questions to Ask Before Deploying Any AI Hiring Tool

On transparency: What criteria drive candidate ranking? Can your team see and modify them? Are candidates informed AI was involved?

On human authority: Does the platform require human confirmation before AI actions complete? Is there a clear record separating AI proposals from human decisions?

On bias: Has the system undergone independent bias audits? Are those results shareable? Does the platform train on your historical hiring data in ways that could replicate past patterns?

On privacy: Does candidate data stay within your organization's boundary? Is it used to train AI features across the vendor's other clients?

On auditability: Can you access a full log of AI-assisted actions, including cancelled ones? How long are records retained?

Vague or evasive answers to any of these are themselves useful data points.

Try Responsible AI Hiring with uRecruits

The research in this guide points to one consistent conclusion: AI makes hiring faster, but human authority is what makes it defensible. uRecruits is built around that principle. The uR Agent handles the structural work (resume parsing, candidate matching, scheduling, and workflow coordination), while recruiters keep full authority over every decision that affects a candidate's outcome.

See it in a real workflow. Book a demo or start a free 30-day trial. No credit card required.